Factors Affecting Accuracy in Photogrammetry

PhotoModeler is based on the science of Photogrammetry, which is defined as “measurement from photographs”. There are several factors that determine the accuracy of a photogrammetric project.

One of the most important concepts is that the accuracy of a photogrammetry project is not fixed: it depends on how the project is done, so you can change some factors in your project to increase its accuracy.

This article refers mostly to ‘relative accuracy’ and ‘precision’. To learn more about relative accuracy vs absolute accuracy vs precision read this knowledge base article.

While the factors described here affect all types of photogrammetry projects (manually-marked, coded-targets, SmartMatch, UAV/drone, etc.), the way these factors affect accuracy depends on the project type. For example, with automation in SmartMatch and UAV projects, you can offset the typically low angles between photos by taking many more photos (the redundancy factor). Hence, one should not be overly concerned with the lower angles found in SmartMatch and UAV projects.

The main factors affecting accuracy are:

- Photo Resolution

- Camera Calibration

- Angles

- Photo Orientation Quality

- Photo Redundancy

- Targets / Marking Precision

Description of Factors

Photo Resolution: The number of pixels in the image. The higher the resolution of the images, the better chance of achieving high accuracy because items can be more precisely located.

Camera Calibration: Calibration is the process of determining the camera’s focal length, format size, principal point, and lens distortion. There are several ways of obtaining this camera information and using a camera that is well calibrated in PhotoModeler will give the best results. Associated with this are two sub-factors: a) certain cameras do not calibrate that well (very wide angle or fish-eye lenses), and b) certain cameras are unstable (calibration changes) – in both cases accuracy will be lower. Conversely, a high-quality lens and stable camera will produce better results.

Angles: Points and objects that appear only on photographs with very low subtended angles (for example, a point that appears in only two photographs taken very close to each other) have much lower accuracy than points on photos that are closer to 90 degrees apart. Making sure the camera positions have good spread will provide the best results. For projects that have low angles between photos, such as UAV and SmartMatch projects, it is the total angle across multiple photos that affects a point’s accuracy (for example, if a point is marked on 5 photos, what matters is the angle between the pair of photos with the greatest angle difference).
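
As an illustration of the "greatest angle difference" idea, here is a minimal Python sketch that computes the largest angle between any two rays joining a 3D point to a set of camera positions. The function name `max_point_angle_deg` and the camera stations are hypothetical and chosen only for this example; this is not how PhotoModeler itself reports angles.

```python
import itertools
import math


def max_point_angle_deg(point, camera_centers):
    """Largest angle (degrees) between any two rays from the point to the cameras."""
    rays = []
    for center in camera_centers:
        # Direction from the 3D point to this camera position, normalized.
        v = [c - p for c, p in zip(center, point)]
        norm = math.sqrt(sum(x * x for x in v))
        if norm == 0:
            raise ValueError("camera position coincides with the point")
        rays.append([x / norm for x in v])

    best = 0.0
    for a, b in itertools.combinations(rays, 2):
        # Clamp the dot product to guard against rounding just outside [-1, 1].
        cos_ab = max(-1.0, min(1.0, sum(x * y for x, y in zip(a, b))))
        best = max(best, math.degrees(math.acos(cos_ab)))
    return best


# Hypothetical setup: five camera stations on an arc around a point at the
# origin, each 10 degrees from the next (0, 10, 20, 30, 40 degrees).
stations = [
    (10 * math.cos(math.radians(a)), 10 * math.sin(math.radians(a)), 0.0)
    for a in (0, 10, 20, 30, 40)
]
angle = max_point_angle_deg((0.0, 0.0, 0.0), stations)
print(f"{angle:.1f}")  # 40.0
```

Each neighbouring pair of photos is only 10 degrees apart, but the first and last stations are 40 degrees apart, and that widest pair is the angle that counts for the point.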

Photo Orientation Quality: During processing, PhotoModeler computes the location and angle of the camera for each photo – this is called Orientation. The orientation quality improves as the number of well-positioned points increases and as the points cover a greater percentage of the photograph area.

Photo Redundancy: A point’s or object’s position is usually more accurately computed when it appears on many photographs – rather than the minimum two photographs. For a given marking precision, more photos ‘average’ that error to provide higher output accuracy. As well, a point that is precisely marked on several photos can compensate for one mark that is not precise.
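
The 'averaging' effect can be illustrated with a textbook statistical idealization: if each mark carried an independent error of the same size, the error of the average would shrink with the square root of the number of marks. The Python sketch below assumes exactly that. The function name `averaged_sigma` is hypothetical, and this is not PhotoModeler's actual computation, which also depends on the angles and orientation quality described above.

```python
import math


def averaged_sigma(single_mark_sigma, n_photos):
    """Idealized standard deviation after averaging n independent, equally precise marks."""
    if n_photos < 2:
        raise ValueError("a 3D point needs marks on at least two photos")
    return single_mark_sigma / math.sqrt(n_photos)


# Relative error for a point marked on 2, 4 and 8 photos, with the
# single-mark error normalized to 1.0 (arbitrary units).
for n in (2, 4, 8):
    print(n, round(averaged_sigma(1.0, n), 3))
```

Under this simplified assumption, going from 2 to 8 photos halves the error (from about 0.707 to about 0.354 of the single-mark error). Real gains will differ because marking errors are not perfectly independent.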

Targets / Marking Precision: The accuracy of a 3D point is tied to the precision of its locations in the images. This image positioning can be improved by using targets. PhotoModeler uses the image data to sub-pixel mark the point and this increases the precision of its placement and hence the overall accuracy of the point’s computed 3D location.

Photogrammetry Accuracy Factors

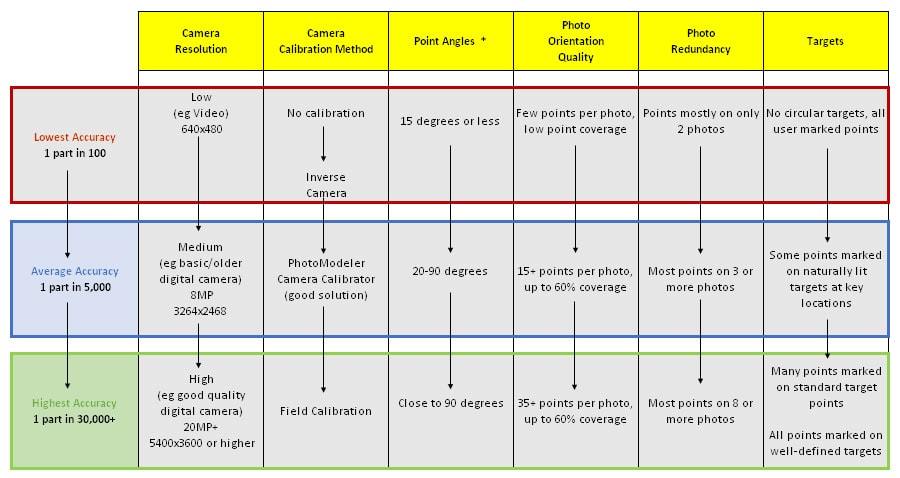

To see how the various combinations of these factors contribute to project accuracy, please review the table below.

* Point angle is the maximum angle of the light rays that image a point. E.g. a point might appear on 4 photos that are each 10 degrees from the next – the maximum angle for that point is 30 degrees (the angle between the first and last photo). Point angle becomes more important when a point is imaged on only 2 photos that are low angle.

Moving down each column one gets increasing accuracy, given the other items remain constant.

For example, the lowest accuracy with PhotoModeler is achieved with a low-resolution, uncalibrated camera, low angles between photos, low coverage of every photo, marks only on two photos, and no subpixel targets.

Conversely, the highest accuracy is achieved with a high-resolution, field-calibrated camera, strong angles between photos, good coverage on most photos, most points appearing on 6 or more photos, and all points marked as targets.

“1 part in NNN” expresses the accuracy you can get relative to the size of your object or scene. 1 part in 100 means that if your scene (or object) is about 3 m in maximum dimension then the accuracy will be 3m/100 or 3cm. 1 in 5000 means 3m/5000 or 0.6mm. These values are approximate but will give you an idea. Take the largest dimension of your object or scene and divide it by NNN to get your accuracy.
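
The arithmetic can be written as a short Python sketch. The function name `expected_accuracy_mm` is hypothetical and used only for illustration; the results are approximate, as noted above.

```python
def expected_accuracy_mm(largest_dimension_mm, parts):
    """Approximate accuracy in mm for a '1 part in NNN' figure."""
    if parts <= 0:
        raise ValueError("parts (NNN) must be positive")
    return largest_dimension_mm / parts


scene_mm = 3000.0  # a scene or object about 3 m in maximum dimension
for parts in (100, 5000):
    print(f"1 part in {parts}: about {expected_accuracy_mm(scene_mm, parts):g} mm")
```

For the 3 m scene this gives about 30 mm (3 cm) at 1 part in 100 and about 0.6 mm at 1 part in 5000, matching the figures in the text.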

The accuracy figures “1 part in NNN” in this table are the one sigma standard deviation accuracies. At 1 part in 30,000 on a 3m object, point positions would be accurate to 0.1mm at 68% probability (one sigma). This is relative accuracy. To find the absolute accuracy, the project must be scaled and/or have control points defined. The accuracy of these scales and control points then affects the absolute accuracy. The higher the accuracy (absolute agreement with a standard or true values) of the scale or the control data, the higher your absolute accuracy (given a constant relative accuracy).

It is hard to get good absolute accuracy without good relative accuracy, so first consider the factors described above.

Point Accuracy vs Project Accuracy

Lastly, the factors above apply to both individual points and the overall project. You can have a highly accurate project (because it uses a high-resolution, well-calibrated camera and, on average, the point angles and redundancy are all or mostly good), but a single point could still have low accuracy because it has low redundancy and a low angle. Even in a good quality project, with all the factors above considered, you still need to check that each point in the project meets the criteria as well. This is especially important for key points in your project and points used to define scale and control locations.
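
A per-point check of this kind might look like the following Python sketch. The `PointStats` record, the `weak_points` function, and the thresholds (`min_photos=3`, `min_angle_deg=20.0`) are all hypothetical values chosen for illustration. They are not PhotoModeler settings or recommendations, and the point data is made up.

```python
from dataclasses import dataclass


@dataclass
class PointStats:
    name: str
    photo_count: int      # number of photos the point is marked on
    max_angle_deg: float  # largest angle between any two of its light rays


def weak_points(points, min_photos=3, min_angle_deg=20.0):
    """Names of points with low redundancy or a low maximum angle.

    The default thresholds are illustrative assumptions only.
    """
    return [
        p.name
        for p in points
        if p.photo_count < min_photos or p.max_angle_deg < min_angle_deg
    ]


# Made-up example: a mostly strong project with one weak scale point.
project = [
    PointStats("corner_1", photo_count=6, max_angle_deg=75.0),
    PointStats("corner_2", photo_count=5, max_angle_deg=60.0),
    PointStats("scale_end_A", photo_count=2, max_angle_deg=8.0),
]
print(weak_points(project))  # ['scale_end_A']
```

Here the project averages look good, but the point used to define scale appears on only two photos at a low angle, so it is the one worth re-shooting or re-marking.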