How It Works

The Steps

01

Take photos from different angles

02

Load images into PhotoModeler software

03

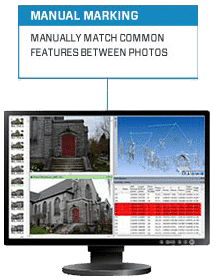

Choose the method

04

Review, measure, export

The Technology

Regardless of the source of imagery (DSLR, mirrorless, or smartphone), the science is the same: light hits an object, reflects off it, passes through the lens, and is captured on the camera’s image sensor or film.

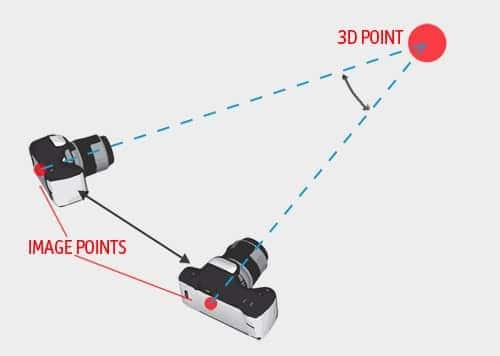

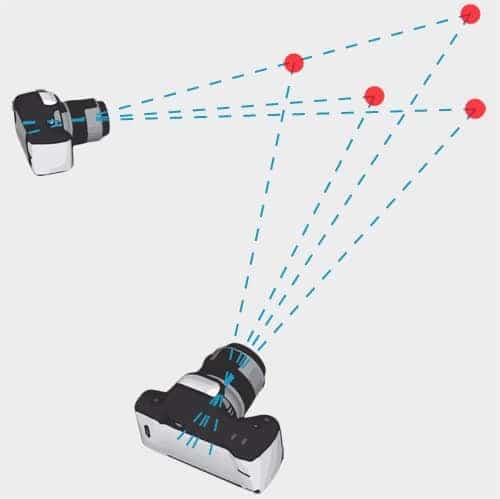

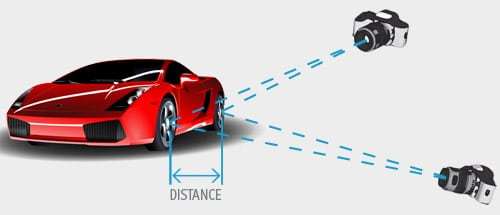
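The image formation described above is commonly modeled as a pinhole camera plus a lens-distortion correction. The sketch below is a minimal illustration under that assumption: `project_point` is a hypothetical helper, the focal length `f` and principal point `cx`, `cy` are in pixels, and only two radial distortion terms (`k1`, `k2`) are included. It is not PhotoModeler's camera model; full calibrations typically include more terms, such as tangential distortion.

```python
# Hypothetical helper for illustration: pinhole projection with simple radial distortion.
# Not PhotoModeler's camera model; real calibrations usually include more terms.


def project_point(X, Y, Z, f, cx, cy, k1=0.0, k2=0.0):
    """Project a point given in camera coordinates (Z pointing forward) to pixels."""
    if Z <= 0:
        raise ValueError("point must be in front of the camera (Z > 0)")
    # Ideal pinhole: every ray passes through the lens center, so divide by depth.
    x, y = X / Z, Y / Z
    # Radial distortion grows with distance from the image center (k1, k2 terms only).
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    # Focal length (pixels) and principal point map the result onto the sensor grid.
    return f * x * factor + cx, f * y * factor + cy


print(project_point(1.0, 0.0, 2.0, f=1000.0, cx=0.0, cy=0.0))  # (500.0, 0.0)
```

Setting `k1` to a nonzero value pushes the same point outward (or inward) from the image center, which is the effect camera calibration measures and later removes.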

Using multiple photographs, we can compute the position of a point in 3D space by simple geometry if we know: a) where the point is imaged on each photo, b) the parameters of the camera (focal length, lens distortion, etc.) from camera calibration, and c) the relative positions and angles of the camera when the photos were captured.
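Given (a), (b) and (c), the "simple geometry" is triangulation: each observation of the point defines a ray, and the point lies where the rays meet. A common textbook way to compute this is linear (DLT) triangulation, sketched below with NumPy. It assumes each camera is summarized by a 3x4 projection matrix P = K[R | t] and that lens distortion has already been removed from the image coordinates; `triangulate` and the example camera values are hypothetical, not part of PhotoModeler.

```python
import numpy as np


def triangulate(projections, image_points):
    """Hypothetical helper: linear (DLT) triangulation of one point seen in 2+ photos.

    projections: list of 3x4 camera matrices P = K [R | t]
    image_points: list of (u, v) undistorted pixel coordinates of the same point
    """
    rows = []
    for P, (u, v) in zip(projections, image_points):
        P = np.asarray(P, dtype=float)
        # Each observation gives two linear equations in the homogeneous point X:
        # u * (P[2] @ X) - P[0] @ X = 0 and v * (P[2] @ X) - P[1] @ X = 0
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.vstack(rows)
    # Least-squares solution: right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


# Example: two cameras with the same calibration, the second shifted 1 unit along x.
K = np.array([[1000.0, 0.0, 640.0], [0.0, 1000.0, 480.0], [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
X_true = np.array([0.2, -0.1, 5.0, 1.0])
obs = [(P @ X_true)[:2] / (P @ X_true)[2] for P in (P1, P2)]
print(triangulate([P1, P2], obs))  # close to [0.2, -0.1, 5.0]
```

With real, slightly noisy image measurements the rays no longer meet exactly, and the SVD returns the algebraic least-squares compromise instead of an exact intersection.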

How do we know the positions and angles of the camera, though? When we know the matching locations of multiple points on two or more photos, there is usually just one mathematical solution for where the photos were taken (up to an overall scale, which is addressed by the reference scale described below).
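One standard way to see why matched points pin down the camera geometry is the eight-point algorithm, which estimates the essential matrix E linking two calibrated views. This is a textbook technique shown for illustration, not necessarily what PhotoModeler uses. The sketch assumes at least eight matches in normalized camera coordinates (calibration already removed); `essential_from_matches` and the synthetic scene are hypothetical. Decomposing E into a rotation and a translation (four candidates, of which only one places the points in front of both cameras) is omitted, and the translation is only recovered up to scale.

```python
import numpy as np


def essential_from_matches(x1, x2):
    """Hypothetical helper: eight-point estimate of E such that x2^T E x1 = 0.

    x1, x2: (N, 2) matching points in normalized camera coordinates, N >= 8.
    """
    x1 = np.asarray(x1, dtype=float)
    x2 = np.asarray(x2, dtype=float)
    n = x1.shape[0]
    h1 = np.hstack([x1, np.ones((n, 1))])
    h2 = np.hstack([x2, np.ones((n, 1))])
    # Each match gives one linear equation in the 9 entries of E:
    # kron(h2, h1) @ E.ravel() equals h2^T E h1.
    A = np.array([np.kron(b, a) for a, b in zip(h1, h2)])
    _, _, vt = np.linalg.svd(A)
    E = vt[-1].reshape(3, 3)
    # Enforce the essential-matrix structure: two equal singular values and one zero.
    u, s, vt = np.linalg.svd(E)
    sigma = (s[0] + s[1]) / 2.0
    return u @ np.diag([sigma, sigma, 0.0]) @ vt


# Synthetic check: camera 2 is rotated slightly about the y axis and shifted sideways.
a = 0.1
R = np.array([[np.cos(a), 0.0, np.sin(a)], [0.0, 1.0, 0.0], [-np.sin(a), 0.0, np.cos(a)]])
t = np.array([1.0, 0.0, 0.1])
rng = np.random.default_rng(0)
X1 = rng.uniform([-1.0, -1.0, 4.0], [1.0, 1.0, 8.0], size=(20, 3))  # camera-1 frame
X2 = X1 @ R.T + t                                                    # camera-2 frame
x1 = X1[:, :2] / X1[:, 2:]
x2 = X2[:, :2] / X2[:, 2:]
E = essential_from_matches(x1, x2)
```

Because the estimate is defined only up to scale (and sign), comparing it with the true relative motion requires normalizing both matrices first.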

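Finding the correct positions and angles of the camera starts with a rough approximation, which is then refined to achieve higher accuracy. The refinement is handled by a key photogrammetry algorithm called 'Bundle Adjustment', which takes advantage of the massive processing power of modern computers to adjust the camera positions, camera angles, and point locations together until the bundle of light rays between all points and camera positions is optimal, that is, until the differences between where points were measured on the photos and where the solution predicts them (the reprojection error) are as small as possible.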
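A full bundle adjustment refines every camera position, camera angle and 3D point at once, usually with a sparse Levenberg-Marquardt solver. The sketch below shows only the core idea on the smallest possible case: the cameras are held fixed and a single point is moved by Gauss-Newton iterations to minimize its reprojection error. The function names, the forward-difference Jacobian and the camera values are assumptions for illustration, not PhotoModeler's implementation.

```python
import numpy as np


def reproject(P, X):
    """Pixel coordinates of 3D point X (length 3) under a 3x4 camera matrix P."""
    h = P @ np.append(X, 1.0)
    return h[:2] / h[2]


def residuals(X, cameras, observations):
    # Stack the 2D differences between predicted and measured image points.
    return np.concatenate([reproject(P, X) - np.asarray(obs, dtype=float)
                           for P, obs in zip(cameras, observations)])


def refine_point(X0, cameras, observations, iterations=10, eps=1e-6):
    """Hypothetical helper: Gauss-Newton refinement of one point (cameras held fixed)."""
    X = np.asarray(X0, dtype=float).copy()
    for _ in range(iterations):
        r = residuals(X, cameras, observations)
        # Forward-difference Jacobian of the residuals with respect to X.
        J = np.empty((r.size, 3))
        for k in range(3):
            dX = np.zeros(3)
            dX[k] = eps
            J[:, k] = (residuals(X + dX, cameras, observations) - r) / eps
        # Solve the linearized least-squares problem J @ step = -r and update.
        step, *_ = np.linalg.lstsq(J, -r, rcond=None)
        X += step
        if np.linalg.norm(step) < 1e-10:
            break
    return X


# Example: noise-free observations from two cameras, refined from a rough guess.
K = np.array([[1000.0, 0.0, 640.0], [0.0, 1000.0, 480.0], [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
X_true = np.array([0.2, -0.1, 5.0])
observations = [reproject(P, X_true) for P in (P1, P2)]
X_refined = refine_point([0.25, -0.05, 4.5], [P1, P2], observations)
```

Because these observations are noise-free, the refined point should land on (0.2, -0.1, 5.0); with real measurements the result is the least-squares best fit rather than an exact match.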

To get absolute measurements in real units (e.g. "those two points are 6.23 cm apart"), one last thing is needed: a reference scale. In the picture below, the distance could be 10 cm and the object a toy car, or it could be 2.8 m and represent a real car. This problem is easily solved by measuring one or more known distances (with a ruler, tape measure, or other distance or survey device) and adding them to the photogrammetric project.
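Applying a reference scale amounts to multiplying every coordinate by one factor: the measured distance divided by the same distance in the unscaled model. The minimal sketch below assumes a single scale bar between two known points; `scale_model` and the example coordinates are hypothetical, and how several scales would be combined (for example averaged or weighted) is not specified here.

```python
import numpy as np


def scale_model(points, i, j, known_distance):
    """Hypothetical helper: rescale a reconstruction so points i and j are known_distance apart."""
    points = np.asarray(points, dtype=float)
    model_distance = np.linalg.norm(points[i] - points[j])
    # Every distance in the model is multiplied by the same factor.
    factor = known_distance / model_distance
    return points * factor, factor


# Model in arbitrary units; a tape measure says points 0 and 1 are 10 cm apart.
model = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0], [2.0, 1.5, 0.0]]
scaled, factor = scale_model(model, 0, 1, 10.0)
print(factor)  # 5.0
print(np.linalg.norm(scaled[1] - scaled[2]))  # 7.5 (cm)
```

If the same scale bar had instead measured 2.8 m, every other distance in the model would change by the same proportion, which is exactly the toy-car versus real-car ambiguity the scale resolves.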

What Makes PhotoModeler Special

While the process seems relatively simple, doing this efficiently and with high accuracy on hundreds of photos and thousands of points is very difficult. We have been fine-tuning the algorithms in PhotoModeler for over 30 years, a claim very few in this industry can make! Our overriding goals for the development of PhotoModeler are user-centered design, high accuracy, and high efficiency.

Read how PhotoModeler can help in your application or field, or read more about photogrammetry software in general.